Most of the attention in AI has gone to training. Bigger models, bigger data centers, bigger funding rounds.

But one of the most important shifts in the market is happening after the model is trained.

That shift is inference, and it is creating a huge wave of demand for flexible GPU infrastructure.

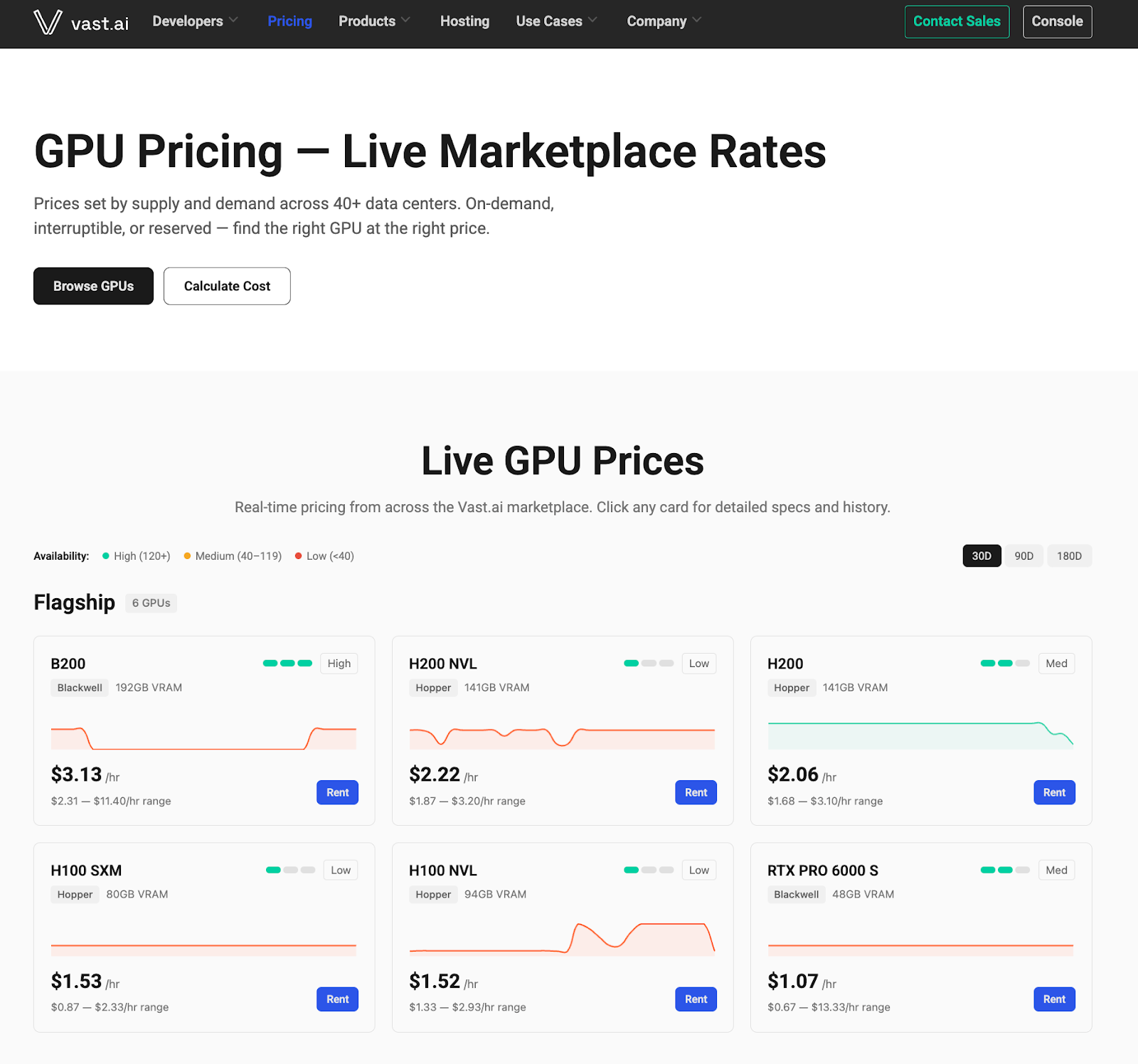

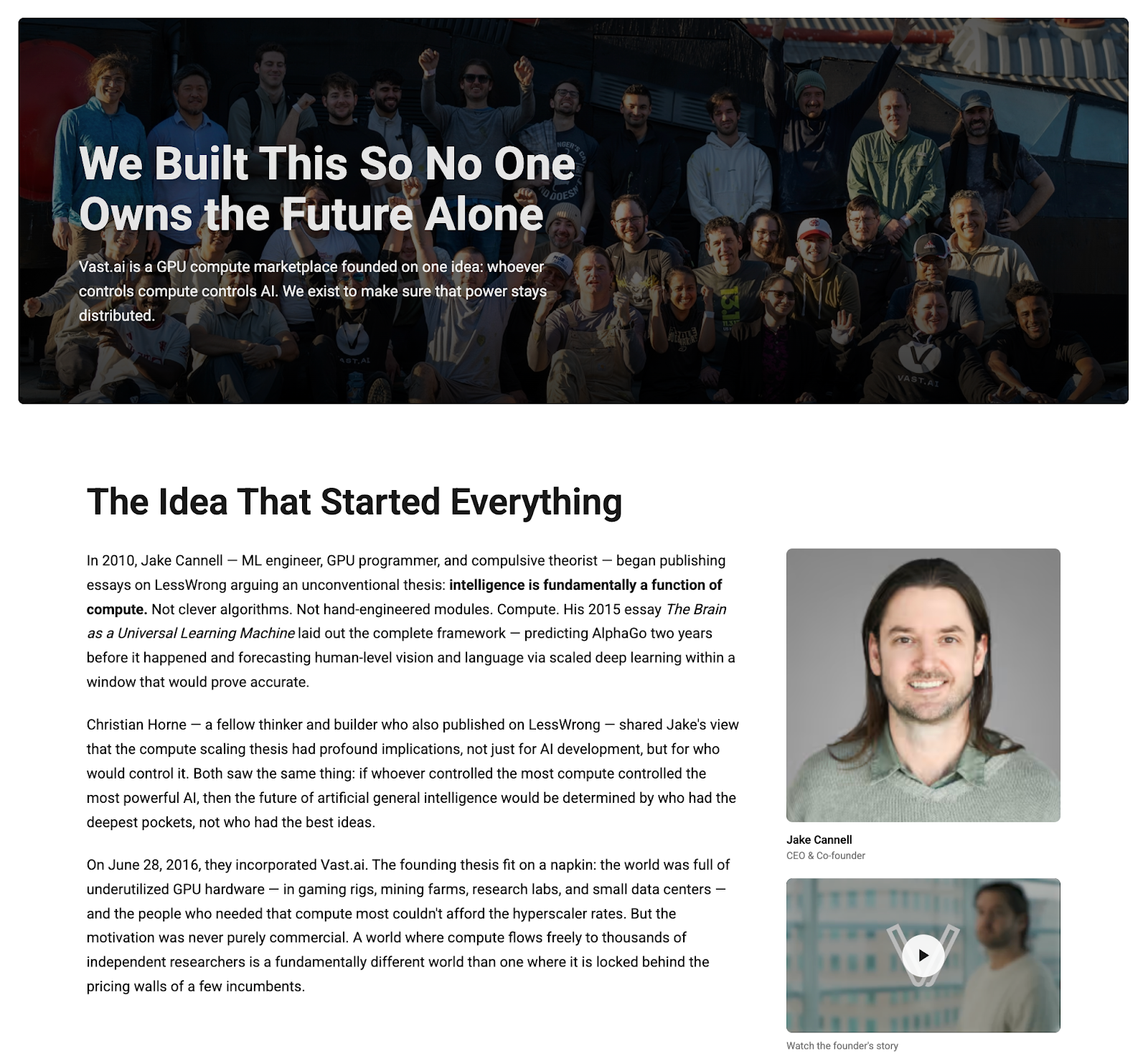

Vast.ai is one of the clearest examples of how big that opportunity has become. The company has built a marketplace for on-demand, low-cost GPUs and now powers workloads for teams all over the world. With more than 20,000 GPUs on the platform and rapid signup growth, Vast.ai is benefiting from a market that wants cheaper, faster, and more flexible access to compute than traditional cloud providers usually offer.

What makes the story especially interesting is that this is not just a hardware story. It is a software story, a marketplace story, and increasingly an org design story too.

Here are the biggest ideas behind Vast.ai’s rise and what they reveal about where AI infrastructure is heading.

Why Inference Is Fueling the Next AI Boom

For the last few years, the AI conversation centered on training. Companies spent massive amounts of capital building clusters to create frontier models. That part is still happening, but training by itself does not create value until people actually use the model.

That is where inference comes in.

Inference is the ongoing cost of running a model so people can interact with it. Every prompt, response, image generation request, video render, or AI workflow depends on inference happening somewhere on a GPU.

That demand is growing fast for a few reasons.

First, more products are becoming AI-native. Second, more people can now build with AI, even without traditional engineering skills. And third, entirely new use cases are opening up, from private model deployment to fine-tuning on proprietary data to compute-heavy video generation.

As inference gets cheaper, usage tends to increase rather than level off. That creates a powerful feedback loop: lower cost makes new use cases viable, new use cases bring in more users, and more users generate more inference demand.

This helps explain why GPU demand feels broader now than it did in earlier AI waves. The market is no longer limited to a narrow group of technical builders. The user base is expanding into a much larger group of people who can create with prompts, workflows, and AI tooling.

Inference Explained in Plain English

A simple way to think about it is this: training creates the model, but inference makes the model usable.

Training is the heavy production step. It takes massive infrastructure, tightly connected GPU clusters, and long runtimes. The output is a trained model.

Inference happens when that model is loaded into GPU memory and made available for interaction. If the model is just sitting as a file, nobody can talk to it. Once it is loaded and running on a GPU, it becomes interactive.

That means every time someone uses ChatGPT, Claude, or another model-based product, inference is happening behind the scenes.

This matters because inference infrastructure has different requirements than training infrastructure. It often does not need the same highly specialized cluster setup. Models can also be compressed or optimized so they run on smaller hardware footprints. That creates room for more flexible, distributed infrastructure options.
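To make the "smaller hardware footprint" point concrete, here is a minimal back-of-the-envelope sketch. It estimates only the memory needed to hold a model's weights at different numeric precisions. The 7-billion-parameter model is a hypothetical example, and the estimate ignores the KV cache, activations, and framework overhead, so real requirements will be higher.

```python
# Rough estimate of GPU memory needed just to hold model weights.
# Assumption: ignores KV cache, activations, and runtime overhead.

# Bytes used to store one parameter at each precision.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return approximate weight memory in GB (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    params = 7e9  # hypothetical 7-billion-parameter model
    for precision in BYTES_PER_PARAM:
        print(f"{precision}: {weight_memory_gb(params, precision):.1f} GB")
```

Under these assumptions, the same model's weights shrink from about 14 GB at 16-bit precision to about 3.5 GB at 4-bit, which is the kind of gap that can move a workload from a data center card to a much smaller GPU.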

For a company like Vast.ai, that difference is the opening. If the market needs more places to run models efficiently, then a marketplace for GPU access becomes much more valuable.

The Moment Vast.ai Hit Hypergrowth

Vast.ai launched in 2018, but the recent acceleration has been dramatic.

The business had already been growing on a strong long-term trajectory, roughly doubling over time. Then the pace picked up sharply. By late 2024 and early 2025, the company started seeing signs that demand was moving into another gear.

Part of that seems tied to better models and better tooling arriving at the same time. As coding tools, model capabilities, and agent-like workflows improve, more people suddenly have reasons to rent GPUs. Some are experimenting. Some are fine-tuning. Some want privacy. Others are building image and video products that require much more compute than text alone.

One interesting detail is how global the demand is. North America is not even the biggest market for Vast.ai. The platform is seeing worldwide interest, especially from developers and AI teams looking for accessible compute without the cost structure of traditional hyperscalers.

That is usually a sign that a trend is real. When demand shows up across geographies and use cases, it is no longer a niche behavior.

Why Teams Choose Vast Over AWS

Cost is a major reason teams choose Vast.ai.

The platform works as a marketplace where hosting partners list their own GPU hardware, set pricing, and define terms. Vast.ai verifies the hardware and performance, then provides the software layer that makes it usable.

Because Vast.ai does not own the underlying equipment, it carries less capital cost and can often offer lower prices for comparable GPU access.

That cost advantage matters a lot for AI teams, especially those running inference-heavy workloads where usage can scale quickly. Even small differences in price compound fast when compute is a core operating expense.
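A quick sketch shows how a small hourly difference adds up. The rates, GPU count, and schedule below are hypothetical illustrations, not actual prices from Vast.ai, AWS, or any other provider.

```python
# Illustrative monthly GPU spend at two hourly rates.
# Assumption: all numbers are hypothetical, chosen only to show the arithmetic.


def monthly_gpu_cost(
    rate_per_gpu_hour: float,
    gpus: int,
    hours_per_day: float = 24,
    days: int = 30,
) -> float:
    """Total cost of running `gpus` GPUs for the given schedule."""
    return rate_per_gpu_hour * gpus * hours_per_day * days


if __name__ == "__main__":
    higher = monthly_gpu_cost(2.00, gpus=8)  # hypothetical $2.00 per GPU-hour
    lower = monthly_gpu_cost(1.50, gpus=8)   # hypothetical $1.50 per GPU-hour
    print(f"Higher rate: ${higher:,.0f} per month")
    print(f"Lower rate:  ${lower:,.0f} per month")
    print(f"Difference:  ${higher - lower:,.0f} per month")
```

In this hypothetical, a 50-cent gap per GPU-hour on eight always-on GPUs comes to $2,880 a month, and the gap grows linearly with every GPU added.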

But price is only part of the story. Teams also want flexibility. Some need short-term experiments. Some want interruptible workloads. Some need private deployments. Some want a path into production without being locked into one provider.

Vast.ai sits in that gap between raw infrastructure and easy access. It gives people a way to get started quickly while still offering options across different GPU types and hosts.

Building a Two-Sided GPU Marketplace

The core business model is a two-sided marketplace.

On one side are people and organizations that own GPU capacity. Some are small operators with a dozen GPUs. Some are larger data center operators with certifications and production-grade setups. On the other side are developers, researchers, startups, and businesses that need compute.

The appeal for suppliers is straightforward. They can monetize spare capacity without having to do marketing, customer acquisition, or support on their own. They also get control over pricing and rental terms.

The appeal for buyers is just as clear. They get a broad market of options in one place.

What is notable here is where the hard work actually is. Many founders assume the biggest challenge in a marketplace is supply. In practice, the buyer side can be the harder one to serve. Buyers show up with expectations shaped by AWS, and many want help fast, even if they are only spending a few dollars.

That creates a support burden that looks more like a software product than a listing site. Users need onboarding help, technical guidance, and reliable service. If the experience breaks down, the marketplace loses trust quickly.

Competing on More Than Just Price

Vast.ai may be known for low-cost GPUs, but the defensibility goes deeper than cheap compute.

The real moat comes from the full product experience. Customer support, host verification, platform reliability, templates, ease of use, and market liquidity all matter.

That is why the company describes itself as a software company. The hard part is not just sourcing hardware. The hard part is building the software layer that makes a fragmented supply base feel easy and trustworthy for buyers.

This is also where competition gets interesting. Many infrastructure players are excellent at data center operations, power procurement, and hardware deployment. Vast.ai’s edge is different. It focuses on the marketplace software, the user experience, and the mechanisms that make supply and demand meet efficiently.

The company is also leaning into pricing transparency. By publishing more pricing data over time, it can become a source of truth for the GPU market itself, which further strengthens its position.

The Real Economics of Hosting GPUs

The economics of supplying GPUs are more complicated than they look.

A host has to think about the upfront cost of the equipment, ongoing power costs, taxes, and future demand. Power is especially important. A supplier with high electricity costs starts at a disadvantage compared with someone operating in Texas, Iceland, or another low-cost region.

The type of GPU matters too. Different chips have different power profiles, and enterprise cards can look very different from consumer cards when you run the numbers.
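One rough way to see how electricity price and power draw change the picture is a simple payback estimate. This is a minimal sketch, not a real host model: every number is hypothetical, the GPU is assumed to draw full power only while rented, and taxes, cooling, marketplace fees, and resale value are all ignored.

```python
# Simplified payback estimate for a single hosted GPU.
# Assumptions (all hypothetical): power is drawn only while rented;
# taxes, cooling, fees, and resale value are ignored.


def hourly_margin(
    rental_rate: float,        # revenue per rented hour, in dollars
    power_watts: float,        # GPU power draw while rented
    electricity_price: float,  # dollars per kWh
    utilization: float,        # fraction of hours the GPU is rented (0 to 1)
) -> float:
    """Average net income per wall-clock hour."""
    power_cost_per_hour = (power_watts / 1000) * electricity_price
    return utilization * (rental_rate - power_cost_per_hour)


def payback_days(
    hardware_cost: float,
    rental_rate: float,
    power_watts: float,
    electricity_price: float,
    utilization: float,
) -> float:
    """Days needed to recover the hardware cost, or infinity if never."""
    margin = hourly_margin(rental_rate, power_watts, electricity_price, utilization)
    if margin <= 0:
        return float("inf")
    return hardware_cost / margin / 24


if __name__ == "__main__":
    for price in (0.10, 0.30):  # cheap vs. expensive electricity, $/kWh
        days = payback_days(2000, 0.40, 350, price, 0.7)
        print(f"${price:.2f}/kWh -> payback in about {days:.0f} days")
```

Even in this stripped-down version, the host with more expensive electricity takes noticeably longer to recover the same purchase, and a low enough rental rate never pays back at all.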

Then there is timing. GPU prices are notoriously hard to predict because demand can change direction quickly. Crypto once shaped that market. Now AI inference is the much bigger force. A host can make a smart purchase at one moment and still get surprised by a sudden shift in pricing or demand later.

In other words, the marketplace provides liquidity and price signals, but suppliers still have to make real operating bets.

The Network Effects Behind Vast.ai

This is where the marketplace model becomes powerful.

As more buyers come to Vast.ai, the platform becomes more attractive to suppliers. As more suppliers list capacity, the platform becomes more useful to buyers. That two-sided loop is the foundation of the growth flywheel.

At scale, it creates a meaningful moat.

A company that becomes the default place to shop for GPUs gains consumer pull. Suppliers know demand is there, so they want to list on the platform. Buyers know supply is there, so they start there first.

There is another layer too. Marketplace competition among suppliers improves pricing and availability for buyers. Over time, the platform can also become the best place to understand market conditions. If people are already using Vast.ai pricing data to make decisions, that informational advantage strengthens the network even further.

The long-term roadmap adds more depth here. Features like bids, futures contracts, and better handling of bare metal or cluster rentals could make the marketplace more dynamic and more sophisticated over time.

Building a Lean Team During Hypergrowth

One of the more surprising parts of the story is how lean the company has stayed.

Vast.ai has around 44 people, with about 10 in technical support and the rest concentrated heavily in engineering. Leadership remains very hands-on, and the team has leaned into an in-office model in Los Angeles and San Francisco to increase collaboration during a fast-moving period.

That choice stands out in a market where many companies stayed remote. But for a business moving quickly through a shifting infrastructure landscape, the office seems to have helped tighten feedback loops and execution speed.

The company has also stayed disciplined about where headcount goes. Instead of building a large go-to-market organization early, it kept the team engineering-heavy and operationally lean.

Why AI Changed Their Hiring Strategy

The most forward-looking part of the conversation was how AI is changing internal hiring decisions.

Despite strong growth, Vast.ai has paused hiring for now.

That sounds counterintuitive until you look at what AI is doing to engineering work. Code generation is accelerating so much that traditional development workflows are changing. Tasks that used to move through long implementation stages can now jump much faster into review, testing, and deployment.

As a result, the value of new hires is shifting. The company is placing more weight on systems thinking, technical design, infrastructure understanding, and the ability to manage increasingly AI-assisted workflows.

That is a useful signal for other founders. In an AI-native company, hiring more people is no longer the automatic answer to growth. The better question is where human judgment still creates the most leverage.

For AI infrastructure companies especially, that seems to be moving toward architecture, security, orchestration, and operational decision-making.

The bigger takeaway is simple: inference is becoming one of the core engines of the AI economy, and the companies that make inference cheaper, easier, and more flexible are in a strong position to grow.

Vast.ai is proving that you do not need to own all the hardware to win in this market. But you do need excellent software, strong marketplace dynamics, and a clear view of where the demand curve is going next.

Resources

🚀 Vast.ai: Marketplace for low-cost GPUs: https://vast.ai

💼 Connect with Travis Cannell on LinkedIn: https://www.linkedin.com/in/traviscannell/

💼 Connect with Wes Bush on LinkedIn: https://www.linkedin.com/in/wesbush/

💼 Connect with Esben Friis-Jensen on LinkedIn: https://www.linkedin.com/in/esbenfriisjensen/

🧠 Sign up for the ProductLed Newsletter: https://www.productled.com/newsletter
